What is chunking?

Chunking is the process of splitting a large document into smaller “chunks”. It is an important part of the Retrieval-Augmented Generation (RAG) ecosystem for several reasons, the most important being the limited context window of Large Language Models (LLMs). Document contents are sent to an LLM to help with extraction and reasoning tasks.

The contents of the documents are sent as context in a “prompt” to the LLM. The total amount of text which can be sent to an LLM is limited by its context size. So a very large document cannot be sent directly to most LLMs. Only the contextually relevant portion(s) of the document should be sent. The document needs to be chunked before the relevant chunks can be identified.

What is an LLM’s context size or window?

Large language models have what is called a context size or context window. The context size of a large language model like GPT 4 is the maximum number of tokens (words, pieces of words, and punctuation marks) the model can consider at one time.

This is also known as the model’s “window” or “attention span.” For instance, the GPT 3.5 Turbo model has a context size of 4,096 tokens, which means it can take into account up to 4,096 tokens when processing and generating text. This reference page from OpenAI lists the context window sizes for their various models. It is also important to remember that, in general, you are charged by the number of tokens an LLM processes, so there is a cost implication. There is a latency implication as well: LLM throughput is measured in tokens per second, so more tokens generally means a slower response.

Note: As a rule of thumb, 1,000 tokens is about 750 English words, so 1,000 words is roughly 1,333 tokens, give or take.

Let’s assume you have 2 documents:

- Document A with 2,000 words (approximately 2,700 tokens)

- Document B with 15,000 words (approximately 20,000 tokens)

Document A fits within the 4,096-token limit of the GPT 3.5 Turbo model, with some room left for the prompt instructions and the response. So we can pass the entire document as context along with a prompt to GPT 3.5 Turbo and expect the model to have context on the whole document in one go.

The scenario is different for Document B: its roughly 20,000 tokens will not fit into the 4,096-token limit of GPT 3.5 Turbo. This is where chunking comes into play. Chunking is not required for Document A, but it is required for Document B, and we will have to select a chunk size for handling it.
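The fit check described above can be sketched in a few lines. This is a rough estimate only: it assumes the common rule of thumb of about 0.75 English words per token, and the `reserved_tokens` budget for prompt instructions and the response is a hypothetical value chosen for illustration. A real tokenizer will give different counts.

```python
import math

# Assumption: rule-of-thumb ratio for English text, not an exact tokenizer count.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(word_count: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Roughly estimate the token count of English text from its word count."""
    return math.ceil(word_count / words_per_token)


def fits_in_context(word_count: int, context_tokens: int, reserved_tokens: int = 0) -> bool:
    """Return True if the estimated document tokens fit in the context window.

    reserved_tokens is a hypothetical budget for the prompt instructions
    and the model's response.
    """
    return estimate_tokens(word_count) + reserved_tokens <= context_tokens


# Document A (2,000 words) and Document B (15,000 words) against a 4,096-token window.
doc_a_fits = fits_in_context(2_000, context_tokens=4_096, reserved_tokens=500)
doc_b_fits = fits_in_context(15_000, context_tokens=4_096, reserved_tokens=500)
print(doc_a_fits, doc_b_fits)
```

Whatever ratio you assume, the conclusion for these two documents is the same: Document A fits and Document B does not.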

Pros and Cons of Chunking

| | No Chunking | With Chunking |
|---|---|---|
| Benefits | 1. The full context of the document is passed to the LLM 2. Almost always provides the best quality / most accurate results 3. No vector DB is involved 4. No embedding is involved 5. No retrieval engine is involved (we do not have to worry about the quality of embedding or chunk size strategies) | 1. Information can be extracted from very large documents that won’t fit into the context of an LLM as a whole 2. Lower cost, since only small chunks of the document are sent to the LLM for a single prompt 3. Lower latency (faster result generation), since only part of the document is sent to the LLM |
| Drawbacks | 1. Does not work for large documents 2. Higher cost, since the entire document is sent to the LLM for every field extraction 3. Higher latency (slower result generation), since the entire document is sent to the LLM irrespective of the field being extracted | 1. Requires a vector DB 2. Requires embeddings 3. Quality of retrieval (selecting the right chunks to send to the LLM) depends on many factors: a) chunk size, b) overlap, c) retrieval strategy, d) quality of embedding, e) information density / distribution within the document 4. Requires iterative experimentation to arrive at the above settings |

Your Choice

If your documents are smaller than the context size of the LLM (Document A)

Choose not to chunk if your document’s text contents can fit into the context size of the selected LLM. This provides the best results. But if you have lots of extractions to be made from each document, the cost might increase significantly.

If your documents are larger than the context size of the LLM (Document B)

Chunking is necessary. There is no other option.

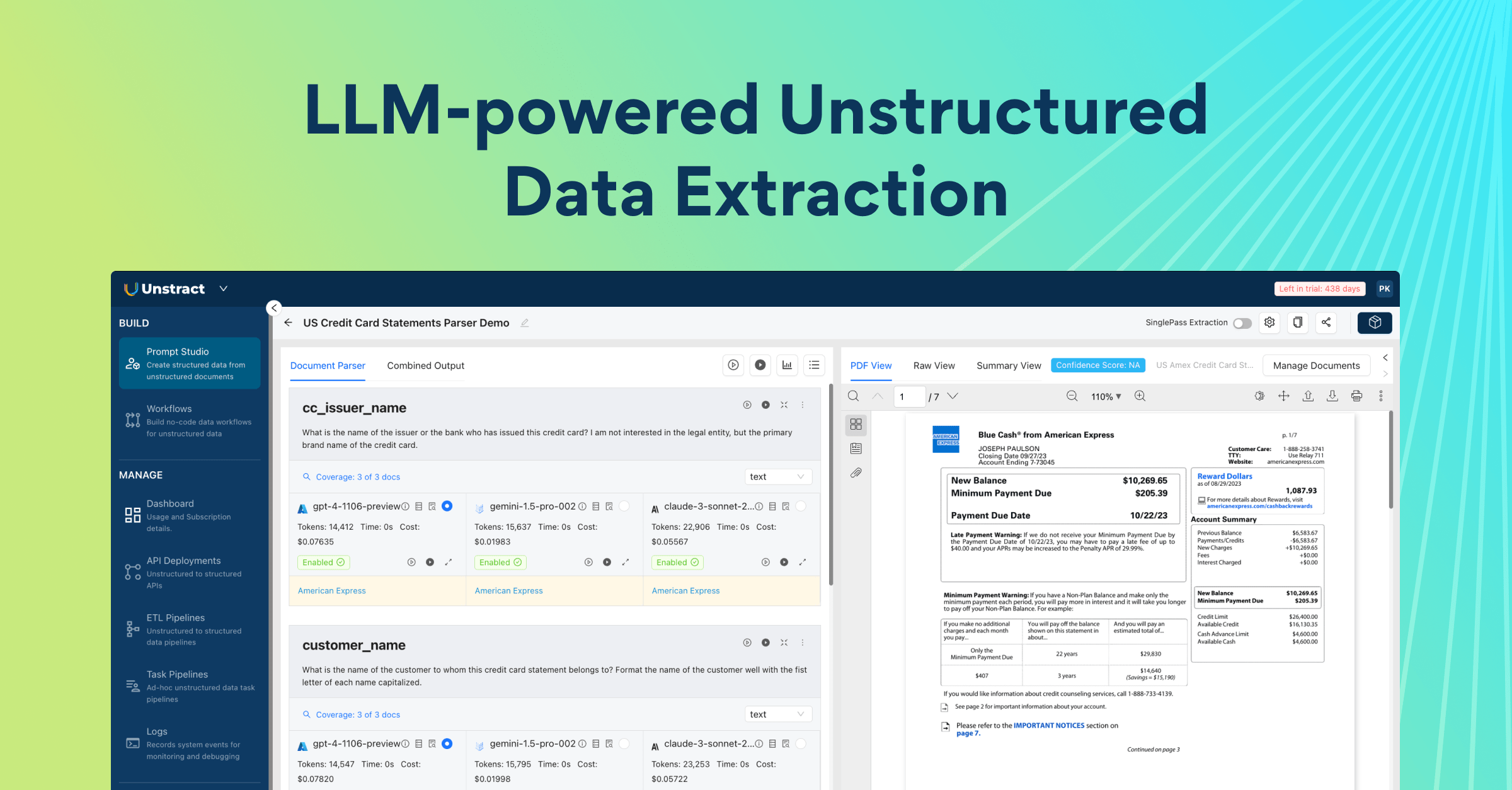

Our SaaS and Enterprise versions of Unstract have a couple features called Summarised Extraction and Single-Pass Extraction. With these options, you can still enjoy the power and ease of use of no chunking and at the same time keep costs low, without any effort on your side.

Popular LLMs and their limits on document sizes

From our experience, we see an average of 400 words per page for dense documents. Most documents have significantly fewer than 400 words per page. For our calculations, let us assume that we are dealing with documents with 500 words per page.

Note: 500 words per page is well on the higher end of the scale. Real-world use cases, especially when averaged over multiple pages, will be much lower.

| LLM Model | Context Size (tokens) | No Chunking (Max pages, approx.) | Requires Chunking if the document is... |
|---|---|---|---|
| Llama | 2,048 (2K) | 3 Pages | > 3 Pages |
| Llama 2 | 4,096 (4K) | 6 Pages | > 6 Pages |
| GPT 3.5 Turbo | 4,096 (4K) | 6 Pages | > 6 Pages |
| GPT 4 | 8,192 (8K) | 12 Pages | > 12 Pages |
| Mistral 7B | 32,768 (32K) | 49 Pages | > 49 Pages |
| GPT 4 Turbo | 131,072 (128K) | 196 Pages | > 196 Pages |
| Gemini 1.5 Pro | 131,072 (128K) | 196 Pages | > 196 Pages |
| Claude 3 Sonnet | 204,800 (200K) | 307 Pages | > 307 Pages |
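A page limit like the ones in the table can be estimated with a small helper. This is a minimal sketch, assuming 500 words per page and the rule of thumb of about 0.75 words per token; the `reserved_tokens` parameter is a hypothetical allowance for the prompt and the response, and the results shift if you assume a different ratio or page density.

```python
def max_pages(
    context_tokens: int,
    words_per_page: int = 500,
    words_per_token: float = 0.75,  # assumption: rule of thumb for English text
    reserved_tokens: int = 0,       # hypothetical budget for prompt + response
) -> int:
    """Estimate how many whole pages fit into a context window without chunking."""
    tokens_per_page = words_per_page / words_per_token
    usable_tokens = max(context_tokens - reserved_tokens, 0)
    return int(usable_tokens // tokens_per_page)


for name, context in [("GPT 3.5 Turbo", 4_096), ("GPT 4", 8_192), ("Mistral 7B", 32_768)]:
    print(name, max_pages(context), "pages")
```

Reserving tokens for the prompt and response lowers the limit further, for example `max_pages(4_096, reserved_tokens=1_000)`.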

Cost Considerations for no chunking strategy

It is tempting to use a no-chunking strategy, considering that today’s leading models can handle 100+ pages. But you also have to consider the cost implications during extraction.

For the sake of calculations, let’s assume that we are dealing with documents with 400 words per page. We see this as an average value for dense documents.

| LLM Model | Approx cost per page |
|---|---|
| GPT 4 | $0.0100 |
| GPT 4 Turbo | $0.0030 |
| Gemini 1.5 Pro | $0.0021 |

Also note that the table above shows the price per page. In real-world use cases, you might need to send the same page to the LLM multiple times when several pieces of information have to be extracted.
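To see how repeated passes add up, here is a small sketch that multiplies the approximate per-page costs from the table by the number of pages and the number of extraction passes. The 50-page document and the 10 passes are hypothetical values chosen for illustration.

```python
# Approximate per-page costs taken from the table above (USD).
COST_PER_PAGE = {
    "GPT 4": 0.0100,
    "GPT 4 Turbo": 0.0030,
    "Gemini 1.5 Pro": 0.0021,
}


def extraction_cost(pages: int, cost_per_page: float, passes: int) -> float:
    """Cost of sending every page of a document to the LLM once per pass."""
    return pages * cost_per_page * passes


# Hypothetical workload: a 50-page document with 10 separate extraction prompts.
for model, per_page in COST_PER_PAGE.items():
    total = extraction_cost(pages=50, cost_per_page=per_page, passes=10)
    print(f"{model}: ${total:.2f} per document")
```

The cost grows linearly with the number of passes, which is why sending the whole document for every field can become expensive at volume.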

Choosing chunk size and overlap

Choosing the right chunking size and overlap is crucial for optimising performance and retrieval quality.

Chunk Size

Each chunk of text is typically “embedded” and added to a vector database for retrieval. Embedding is the process of converting the information in a chunk into a “vector” that represents it. The size of each chunk is important because it affects the density of information available for embedding. A very small chunk may not carry enough information to be meaningful on its own, while a very large chunk can dilute the information that gets embedded.
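The idea of embedding chunks and retrieving the closest ones can be illustrated with a toy example. Note the substitution: instead of a learned embedding model and a vector database, this sketch uses bag-of-words count vectors and cosine similarity over an in-memory list. The sample chunks and the query are made up for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine_similarity(query_vec, embed(c)), reverse=True)
    return ranked[:k]


chunks = [
    "The invoice total is 4,200 USD, due within 30 days.",
    "Supplier address: 12 Harbour Road, Chennai, India.",
    "Payment terms: invoice due 30 days after delivery.",
]
query = "When is the invoice due?"
best = top_k(query, chunks, k=2)

# Padding a relevant chunk with unrelated text lowers its similarity score.
focused = cosine_similarity(embed(query), embed(chunks[0]))
diluted = cosine_similarity(
    embed(query),
    embed(chunks[0] + " Warehouse ships goods every Monday and Thursday by sea freight."),
)
print(best[0], focused > diluted)
```

Even in this simplified setup, the diluted chunk scores lower than the focused one, which mirrors the dilution effect of overly large chunks described above.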

Overlap

Overlap between chunks ensures that information at the start and end of a chunk is not isolated contextually. An overlap helps retrieved chunks join together seamlessly.
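A fixed-size chunker with overlap can be sketched as a sliding window. As a simplification, this example counts whitespace-separated words rather than model tokens; the function name `chunk_words` is hypothetical, and production chunkers typically also respect sentence or section boundaries.

```python
def chunk_words(text: str, chunk_size: int, overlap: int) -> list[str]:
    """Split text into chunks of up to chunk_size words, each sharing
    `overlap` words with the previous chunk."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the window has reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_words(sample, chunk_size=4, overlap=1):
    print(chunk)
```

With a chunk size of 4 and an overlap of 1, the last word of each chunk is repeated as the first word of the next one, so content at a boundary appears in both chunks.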

Determining Chunk Size

Context size of the LLM: Consider the maximum context window of your LLM (e.g. 4,096 tokens for GPT 3.5 Turbo), since the chunks you send must fit within it. When choosing smaller sizes, make sure that each chunk still contains enough content to give the LLM meaningful context.

Content type: Depending on the type of text (e.g., technical documents, conversational transcripts), different chunk sizes may be optimal. Note that texts which are dense with information-rich content might require smaller chunks. If there is “too much” information in each chunk, the vector DB might not return optimal chunks for a given query during the retrieval phase.

Retrieval requirements: Some retrieval strategies retrieve the “top-k” chunks and pass them to the LLM. So for a ‘k’ value of 3, the DB will retrieve 3 chunks to be passed to the LLM. All 3 chunks, together with the prompt, should fit in the LLM’s context size simultaneously.
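The top-k constraint gives a simple upper bound on chunk size. The sketch below assumes a hypothetical `reserved_tokens` budget for the prompt instructions and the response, and divides what is left of the context window evenly among the retrieved chunks.

```python
def max_chunk_tokens(context_tokens: int, top_k: int, reserved_tokens: int) -> int:
    """Largest chunk size (in tokens) such that top_k chunks plus the
    reserved prompt/response budget still fit in the context window."""
    if top_k <= 0:
        raise ValueError("top_k must be positive")
    available = context_tokens - reserved_tokens
    if available <= 0:
        return 0
    return available // top_k


# Example: a 4,096-token window, k = 3, and 1,000 tokens reserved (assumed value).
limit = max_chunk_tokens(context_tokens=4_096, top_k=3, reserved_tokens=1_000)
print(limit)
```

This is only an upper bound; the content type and retrieval quality considerations above may call for considerably smaller chunks.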

Deciding on Overlap Size

The overlap should be big enough to maintain the context between chunks. Typically, an overlap of 10-20% of the chunk size is a good starting point. Too much overlap reduces the differentiation between two consecutive chunks, since they end up carrying largely the same information. You should find a balance based on your specific application needs.
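One practical side effect of overlap is that it increases the number of chunks to embed and store. The sketch below derives the overlap from a fraction of the chunk size and counts the resulting chunks for a sliding-window chunker; the 10,000-token document and 1,000-token chunk size are hypothetical values.

```python
import math


def overlap_from_fraction(chunk_size: int, fraction: float) -> int:
    """Overlap expressed as a fraction of the chunk size (e.g. 0.1 to 0.2)."""
    return round(chunk_size * fraction)


def count_chunks(total_tokens: int, chunk_size: int, overlap: int) -> int:
    """Number of chunks a sliding window produces for a document of total_tokens."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    if total_tokens <= 0:
        return 0
    if total_tokens <= chunk_size:
        return 1
    step = chunk_size - overlap
    return 1 + math.ceil((total_tokens - chunk_size) / step)


for fraction in (0.0, 0.1, 0.2, 0.5):
    overlap = overlap_from_fraction(1_000, fraction)
    print(f"overlap {overlap}: {count_chunks(10_000, 1_000, overlap)} chunks")
```

Moving from no overlap to 50% overlap nearly doubles the chunk count in this example, while 10-20% adds only a few extra chunks.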

Experiment and Adjust

Start with a strategy based on the discussion above and then test the chunk size and overlap in real-world scenarios. Analyse the impact of your choices on retrieval quality and on the correctness of the LLM’s output, and adjust the chunk size and overlap based on the results. This might require several iterations before you find the best size and overlap for a given use case.
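An experiment loop of this kind can be sketched as a small parameter sweep. Everything here is a simplified stand-in: chunks are measured in words, retrieval ranks chunks by keyword overlap instead of vector similarity, and quality is a hypothetical "hit rate" (the share of test queries whose expected answer appears in a retrieved chunk). The sample text and test cases are made up for illustration.

```python
import itertools
import re


def chunk_words(words: list[str], chunk_size: int, overlap: int) -> list[str]:
    """Sliding-window chunker over a list of words."""
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break
    return chunks


def terms(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, chunks: list[str], k: int) -> list[str]:
    """Toy retrieval: rank chunks by the number of query terms they share."""
    query_terms = terms(query)
    return sorted(chunks, key=lambda c: len(query_terms & terms(c)), reverse=True)[:k]


def hit_rate(text, test_cases, chunk_size, overlap, k=1) -> float:
    """Share of (query, expected answer) pairs answered by the top-k chunks."""
    chunks = chunk_words(text.split(), chunk_size, overlap)
    hits = sum(
        any(expected in chunk for chunk in retrieve(query, chunks, k))
        for query, expected in test_cases
    )
    return hits / len(test_cases)


def sweep(text, test_cases, chunk_sizes, overlap_fractions, k=1):
    """Score every (chunk size, overlap) combination, best first."""
    results = []
    for size, fraction in itertools.product(chunk_sizes, overlap_fractions):
        overlap = int(size * fraction)
        results.append(((size, overlap), hit_rate(text, test_cases, size, overlap, k)))
    return sorted(results, key=lambda r: r[1], reverse=True)


text = "Invoice number is INV-42. Payment is due on 5 May. Supplier is Acme Ltd."
test_cases = [("invoice number", "INV-42"), ("payment due", "5 May")]
results = sweep(text, test_cases, chunk_sizes=[4, 100], overlap_fractions=[0.0, 0.25])
for (size, overlap), score in results:
    print(f"chunk_size={size} overlap={overlap} hit_rate={score:.2f}")
```

In this tiny example the 4-word chunks split "Payment is due on" from "5 May", so the second query misses. With real documents you would swap in your actual chunker, retriever, and a representative set of test queries.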

Resource Constraints

Large chunks and large overlaps can add stress on the compute resources. Make sure that the settings are sustainable based on cost and response latency requirements. If your application requires very low latency, you might need to optimise for fast retrieval and LLM evaluation at the potential cost of quality of retrieval.

You’ll find more pieces getting published on topics such as large language models (LLMs), Retrieval-augmented generation (RAG), vector databases, and other technologies in the AI stack. These insights are born from firsthand experience in crafting AI-powered solutions to tackle real-world business challenges.

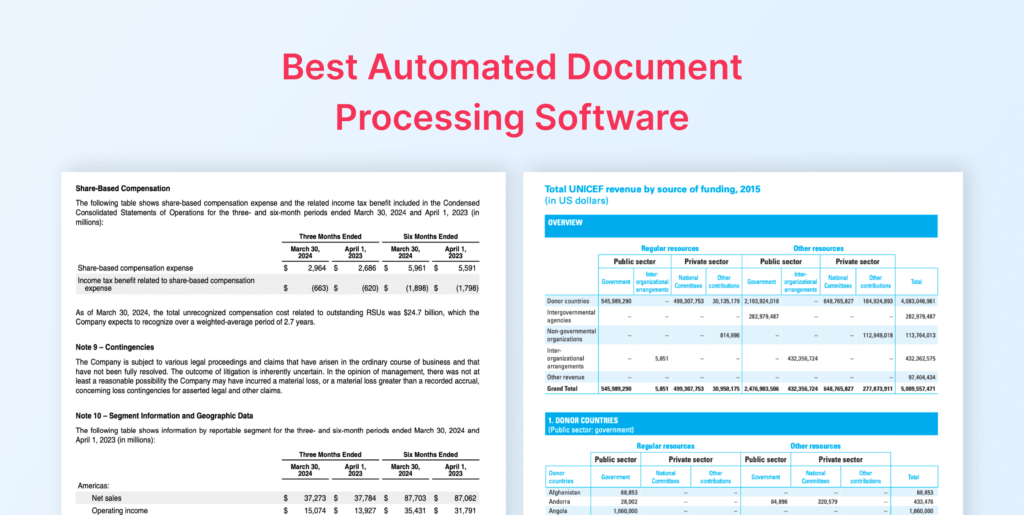

While you’re here, be sure to explore Unstract, our open-source, no-code LLM platform to launch APIs and ETL Pipelines to structure unstructured documents.