
A dual-LLM consensus engine ensures only accurate data makes it into your API, ETL and HITL workflows.

Two LLMs, an extractor and a challenger, run your prompts in parallel. You get an output only if both agree, and NULL if not. Configure any combination: OpenAI + Claude, Azure GPT + Vertex, and more!
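The agree-or-NULL rule can be pictured with a minimal Python sketch. It is an illustration only, not Unstract's implementation: `consensus`, `normalize`, and the `LLMCall` type are hypothetical names, the two models are stubbed with plain functions, and "agreement" is assumed to mean equality after trimming whitespace and ignoring case.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Optional

# Hypothetical stand-in for an LLM call: (prompt, document) -> answer text.
LLMCall = Callable[[str, str], str]


def normalize(value: str) -> str:
    """Assumed agreement rule: ignore case and extra whitespace."""
    return " ".join(value.split()).casefold()


def consensus(prompt: str, document: str,
              extractor: LLMCall, challenger: LLMCall) -> Optional[str]:
    """Return the extractor's answer if the challenger agrees, else None (NULL)."""
    # Run both models at the same time rather than one after the other.
    with ThreadPoolExecutor(max_workers=2) as pool:
        extracted = pool.submit(extractor, prompt, document)
        challenged = pool.submit(challenger, prompt, document)
        first, second = extracted.result(), challenged.result()
    return first if normalize(first) == normalize(second) else None


# Stub models so the sketch runs without any API keys.
agreed = consensus("Invoice total?", "Total: $1,200",
                   lambda p, d: "$1,200", lambda p, d: " $1,200 ")
disputed = consensus("Invoice total?", "Total: $1,200",
                     lambda p, d: "$1,200", lambda p, d: "$1,280")
print(agreed, disputed)
```

Here `agreed` holds the extracted value and `disputed` is `None`, mirroring the NULL returned when the two models differ. A production comparison would likely need a field-aware notion of agreement (dates, currency formats) rather than plain string equality.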

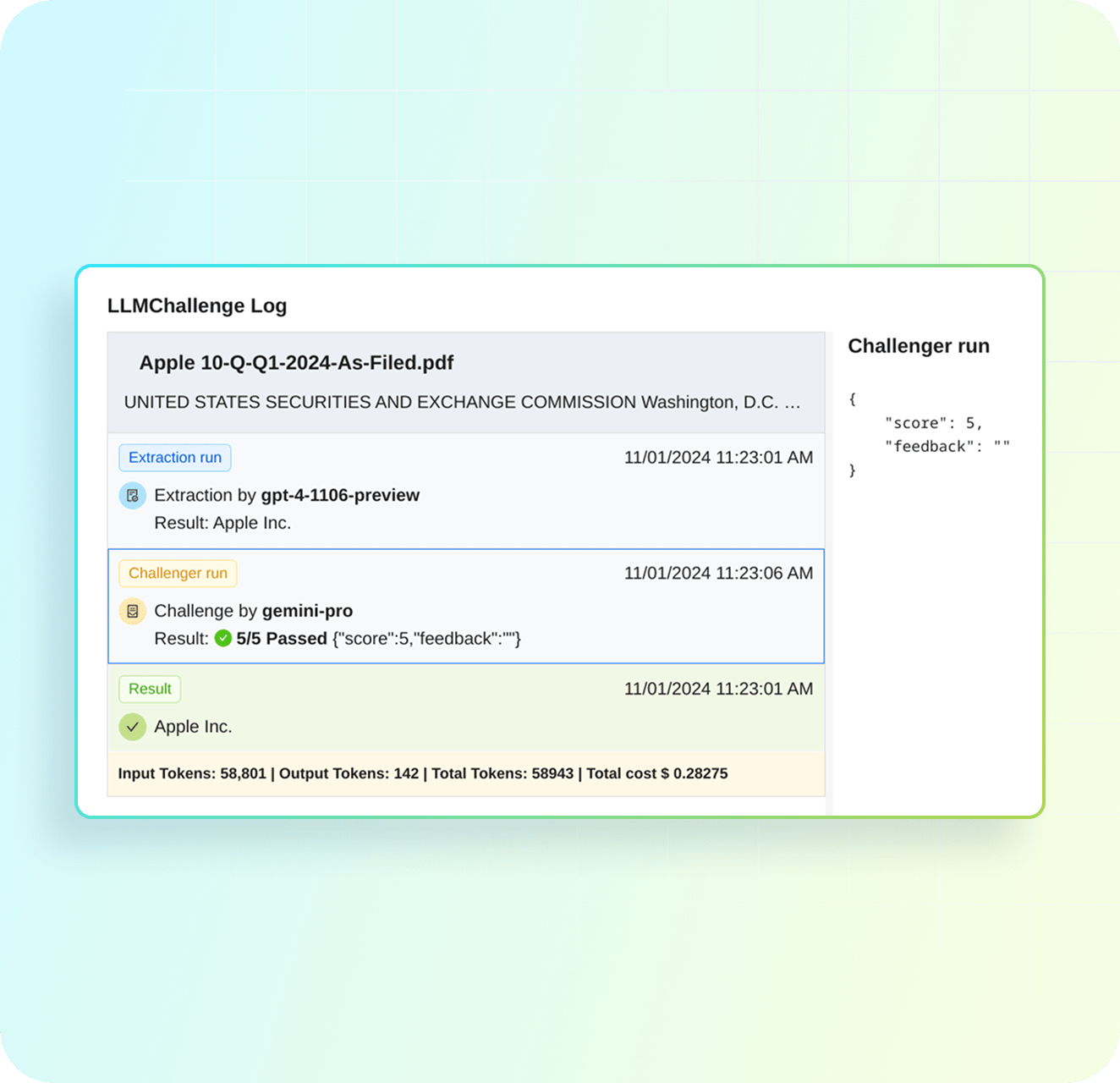

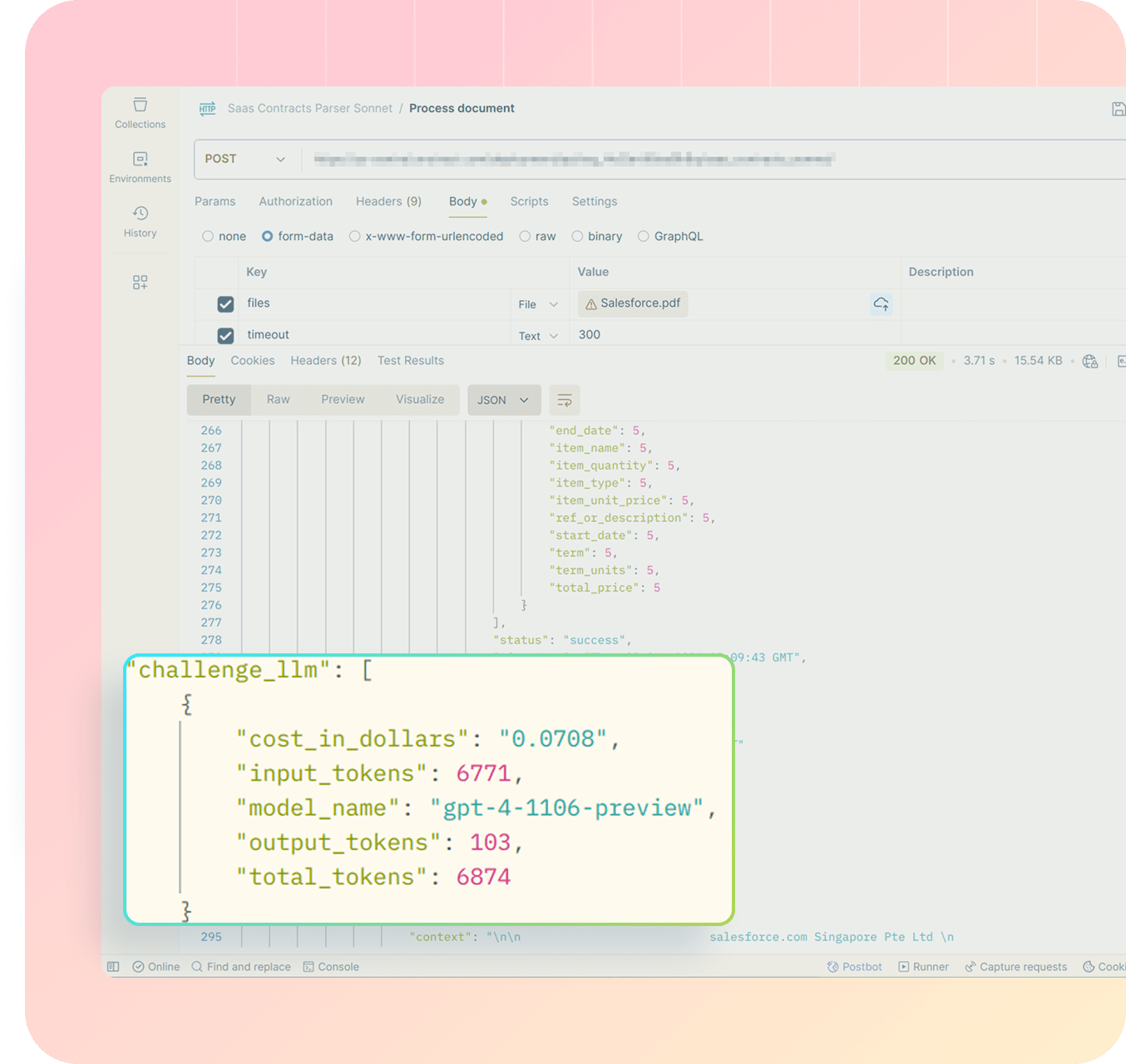

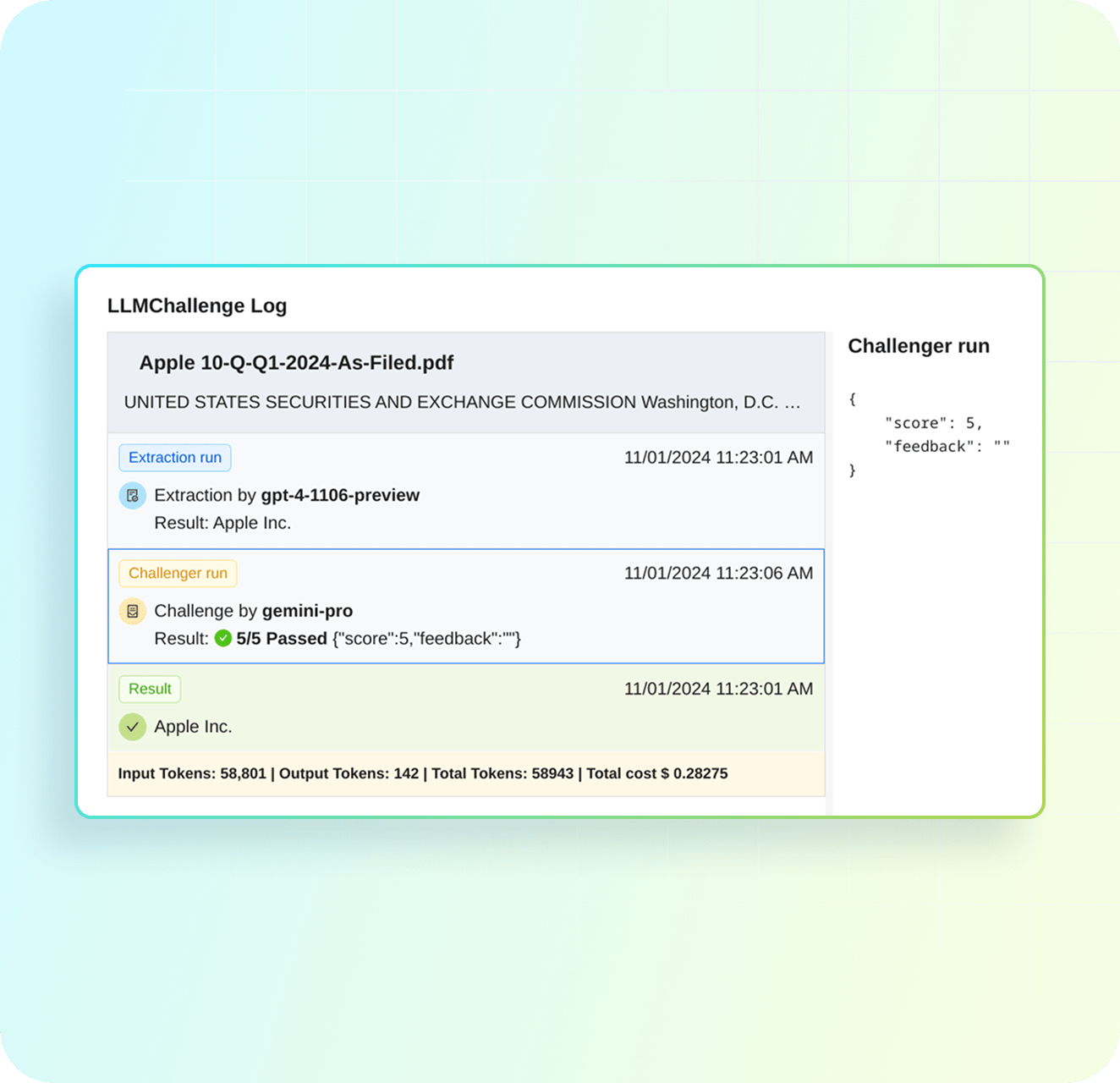

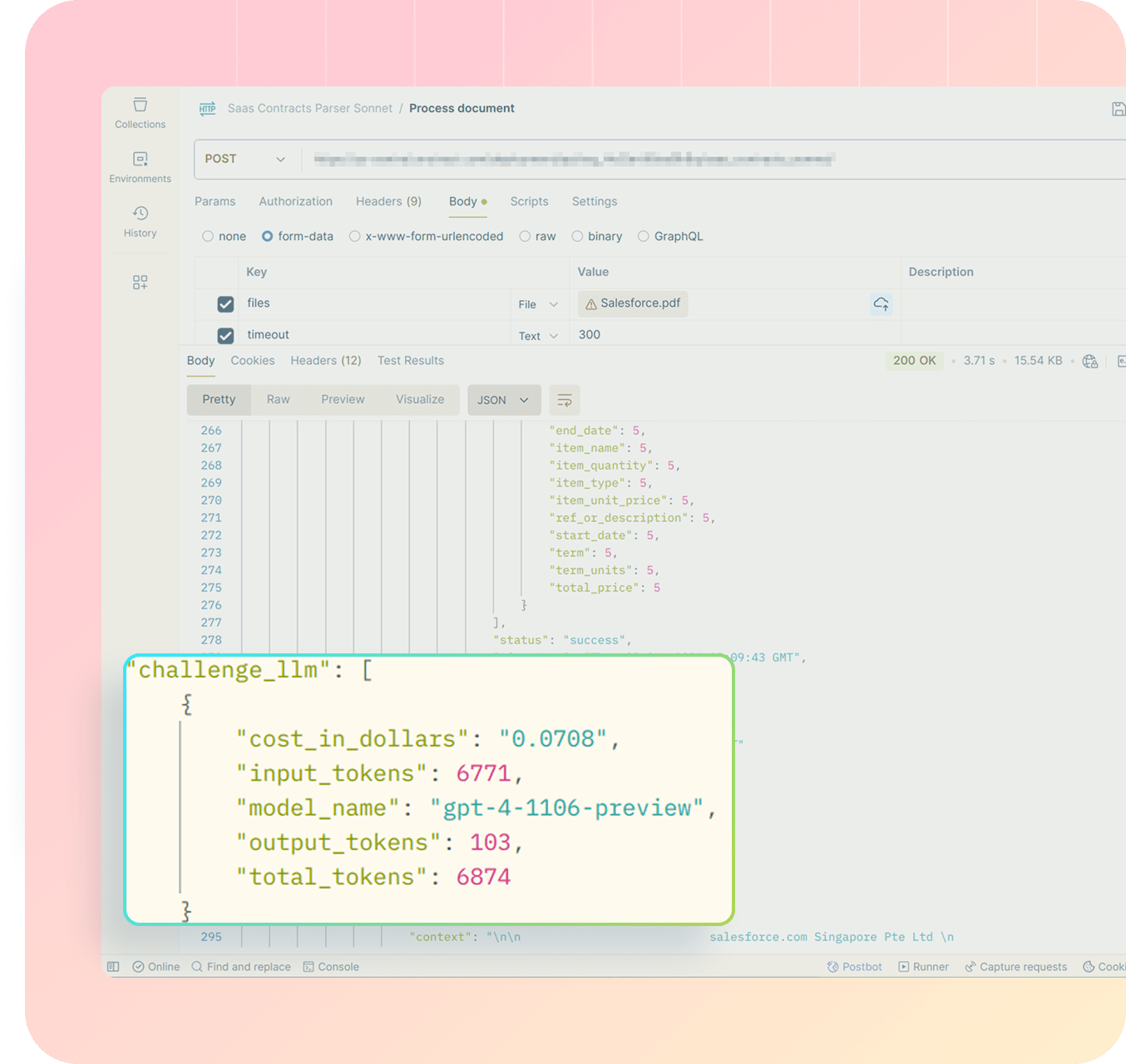

Get drill-down insights from the metadata captured for every extraction. Track token costs, view challenger confidence scores, or debug outputs after deployment.

CTO, Tokenstreet

Unstract lets us turn a wide range of document formats into clean, structured data with low integration effort and without compromising on enterprise-grade controls. Its high extraction accuracy paired with clear highlight-based validation speeds up our process a lot!

Production-Grade Data Integrity

Improved Automation Scaling

Reduced Manual Oversight

We recommend pairing LLMs from different providers. Popular combinations: OpenAI GPT-4 + Google Gemini Pro, Anthropic Claude + Cohere, OpenAI + Anthropic.

The field returns NULL.

The consensus check typically adds 2-5 seconds to extraction time. For financial and legal documents, accuracy trumps speed.

Technically yes, but you shouldn’t! Using the same model (or even models from the same provider) defeats the purpose because they tend to make similar mistakes. Provider diversity is key to catching different error patterns.

Important distinction: NULL means “we couldn’t reach consensus.” Empty means “we agreed this field has no value.”
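Downstream code should keep these two cases apart. The sketch below shows one way to do that in Python, assuming NULL arrives as `None` and an agreed-empty field arrives as an empty string. Both representations, along with the `route_field` helper and its return labels, are assumptions made for illustration and are not taken from the product's API.

```python
from typing import Optional


def route_field(value: Optional[str]) -> str:
    """Decide what to do with one extracted field (hypothetical helper)."""
    if value is None:
        # NULL: the two LLMs could not reach consensus.
        return "needs_review"
    if value == "":
        # Empty: both LLMs agreed the field has no value.
        return "confirmed_empty"
    # Both LLMs agreed on a concrete value.
    return "accepted"


fields = {"invoice_number": "INV-001", "po_number": "", "total": None}
routes = {name: route_field(value) for name, value in fields.items()}
print(routes)
```

Testing for `None` before testing for emptiness matters: a generic truthiness check such as `if not value` would merge the two cases and send agreed-empty fields to human review unnecessarily.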

Yes. The full conversation log is available via API for every extraction. You can see why the LLMs disagreed and the confidence score given by the challenger LLM.
